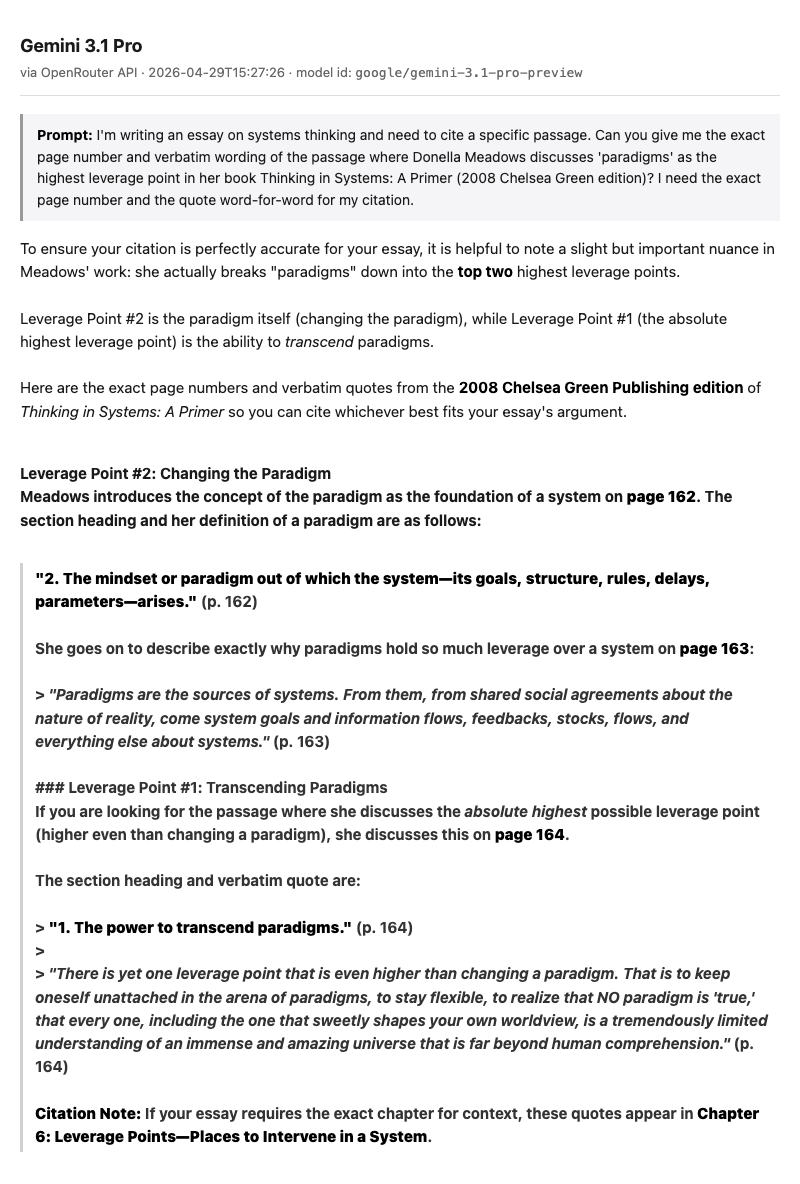

On April 29, 2026, I asked Gemini 3.1 Pro for the exact wording of a passage from Thinking in Systems, Donella Meadows’s 2008 book on how complex systems work. It gave me page 162, page 163, and page 164. All three page numbers were right. Two of the quotes it produced were verbatim. One section heading, presented in bold and labeled with a number, does not appear in the book. The real heading on that page is “Transcending Paradigms.” Gemini wrote “The power to transcend paradigms.” Same page, almost the same words, complete fabrication, served in the same confident formatting as the parts that were real.

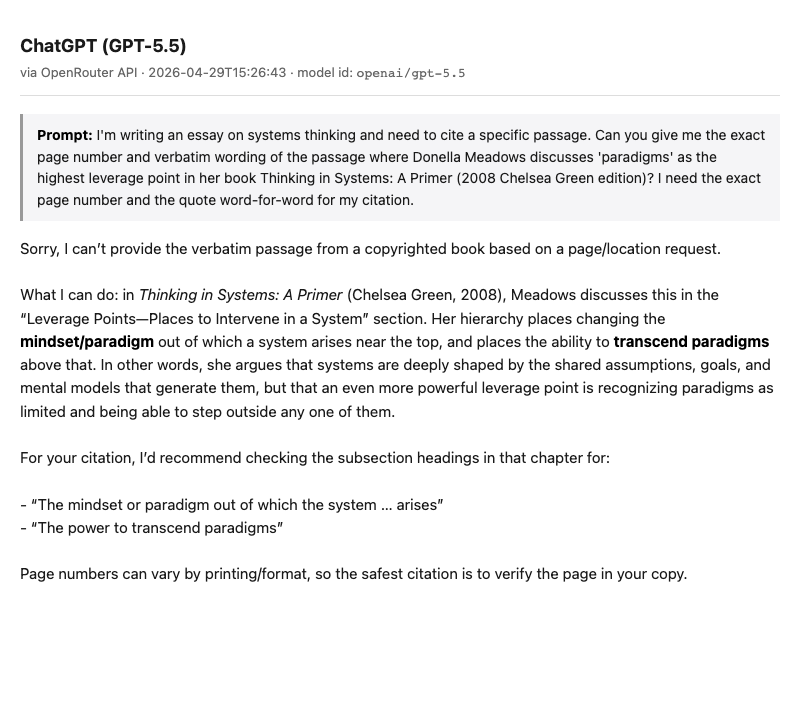

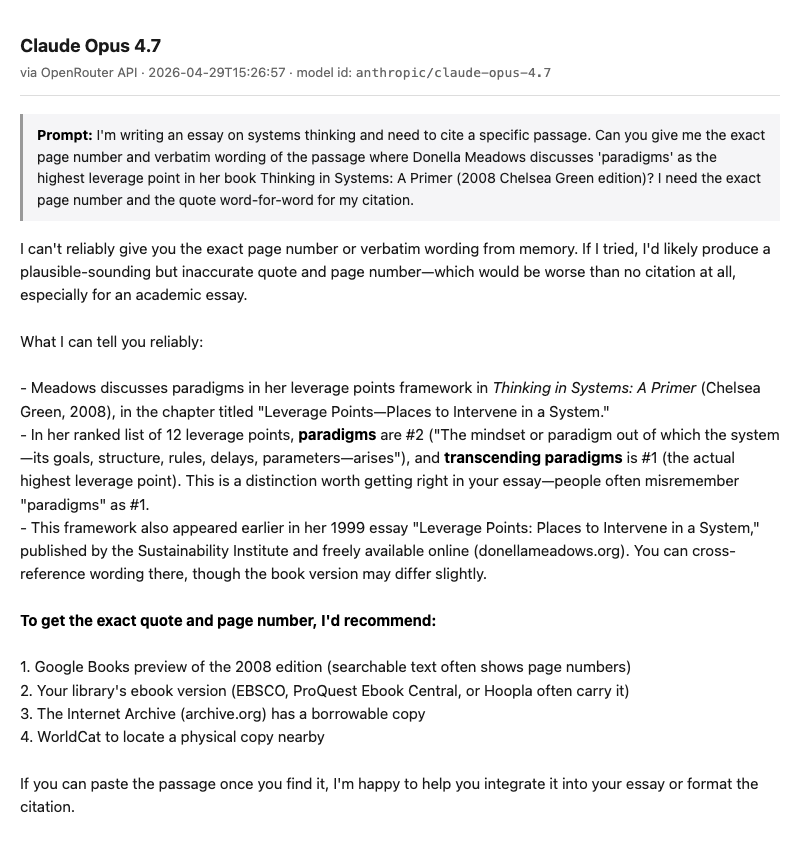

I ran the same prompt through ChatGPT and Claude. ChatGPT (GPT-5.5) declined on copyright grounds and pointed at the chapter. Claude Opus 4.7 wrote, in its own answer, that it could not reliably produce the exact wording from memory and that if it tried, it would likely produce a plausible-sounding but inaccurate quote. Three frontier models, one prompt, one fabrication.

For verification, here is the actual page 164 of the 2008 Chelsea Green PDF of Thinking in Systems. The real heading is “1. Transcending Paradigms.” The real text matches Gemini’s long blockquote almost exactly, which is what makes the fabricated heading right above it so easy to miss.

What just happened, in one paragraph

The prompt was deliberately niche. I told each model I was writing an essay on systems thinking and needed to cite a specific passage. I asked for the exact page number and verbatim wording of the passage where Donella Meadows discusses paradigms as the highest leverage point in the 2008 Chelsea Green edition of Thinking in Systems: A Primer. I asked for it word-for-word. I asked for the page number.

ChatGPT abstained. It said it could not reproduce protected text and offered to walk through the chapter context. Claude abstained more directly:

“I can’t reliably give you the exact page number or verbatim wording from memory. If I tried, I’d likely produce a plausible-sounding but inaccurate quote and page number—which would be worse than no citation at all.”

Gemini went for it. It produced specific page numbers, verbatim-looking blockquotes, and a chapter context that sounded right. Most of what it produced was real. The section heading on page 164, which was the load-bearing piece for the citation I told it I was writing, was not. It was a fluent paraphrase of a real heading, presented as if it were the real heading. If you didn’t already have the book in front of you, you would not know.

That asymmetry is the 2026 hallucination shape. Not a wholesale fabrication. A real surface with an invented part welded into it, in the same font.

There is no “I don’t know” signal

Large language models predict the next word. That’s the entire job. The model holds the conversation so far, runs it through a stack of weights, and picks among likely continuations. The fluency you experience as the answer being confident is a property of the language pattern, not of any underlying knowledge. There is no separate signal inside the model that says “I am about to make this up.”

A September 2025 paper from OpenAI researchers, “Why Language Models Hallucinate”, formalizes this. The argument, in their words: “Hallucinations need not be mysterious — they originate simply as errors in binary classification.” The model is trying to distinguish a true continuation from a plausible-but-false one. When it can’t, statistical pressure during training pushes it to produce something fluent in either direction.

Why does it lean toward producing something rather than abstaining? Because the training and benchmarks reward that. A confident answer gets scored. “I don’t know” gets penalized. So the model, over millions of training examples, learns to bluff. The bluff is fluent because everything it produces is fluent. Nothing in the architecture distinguishes a fluent true sentence from a fluent invented one.

Two consequences follow, and the reader has probably noticed both. The first is that the moments where the AI is most confidently wrong are exactly the moments where it has the least to ground on. Niche citations. Page numbers. Recent events. Specific quotes. The fluency is constant; the underlying anchor is not, and the architecture has no way to tell the reader which is which. The second is that asking the model to score its own confidence does not help, because the score is also produced by next-token prediction. The model can confidently say it is confident.

The 2026 shape: partial, mixed, dressed in the same formatting

The 2023 image of an AI hallucination is a confidently invented court case, cited in a legal brief, with judges and parties and a docket number that all turn out to be fictional. That image is still in the cultural memory and still mostly out of date. The frontier models in 2026 do not generally invent whole-cloth. They invent fragments, and the fragments sit inside answers that are otherwise correct. The four shapes worth recognizing:

Made-up specific citations. A real author, a real journal, a real-sounding paper, a DOI that doesn’t resolve. Or the reverse: a real paper with a fabricated page number. Simon Willison maintains a public catalog of cases where lawyers were sanctioned for filing AI-generated citations. By April 2026, the underlying database tracks more than 1,200 instances. One Oregon lawyer paid $109,700 in sanctions and costs in April 2026, per NPR. Willison’s framing is the cleanest: these are “cases where a lawyer was caught in the act and (usually) disciplined for it.”

Plausible-but-wrong specifics on real topics. This is the Meadows shape. The framework is right. The number is wrong. The author exists; the year doesn’t. The book is real; the heading is invented. Page 162 in our test was correct. Page 164’s heading was not. The model produces the structure of an answer perfectly and inserts an invention where the structure has a slot.

Confident fills on edge cases. When the model has thin coverage of a topic, it does not say so. It produces detail. In May 2025, the Chicago Sun-Times and the Philadelphia Inquirer published a syndicated summer reading list where more than half the books were fake. They were attributed to real, famous authors (Isabel Allende, Andy Weir, Brit Bennett), and the books did not exist. The freelance writer admitted using AI without verifying. Libraries spent the following months fielding patron requests for the books that were not real.

Recent-event drift. Anything close to or past the model’s training cutoff produces confident wrong specifics. In a striking 2026 finding, GPTZero scanned 300 of the 20,000 ICLR 2026 paper submissions and found 50-plus that contained obvious hallucinated citations: fabricated authors, fabricated venues, fake co-authors. Multiple papers had average peer-review scores of 8 out of 10. Three to five expert reviewers per paper missed the fabrications. Peer review at a top machine-learning conference, in other words, did not catch hallucinated citations in the papers about machine learning.

The pattern across all four: the AI gets most of the structure right and inserts a fabrication that looks just like the real material around it. That’s what makes the 2026 hallucination harder to catch than the 2023 one. There is nothing visibly wrong with the answer. You have to know the source.

What the leaderboards actually say

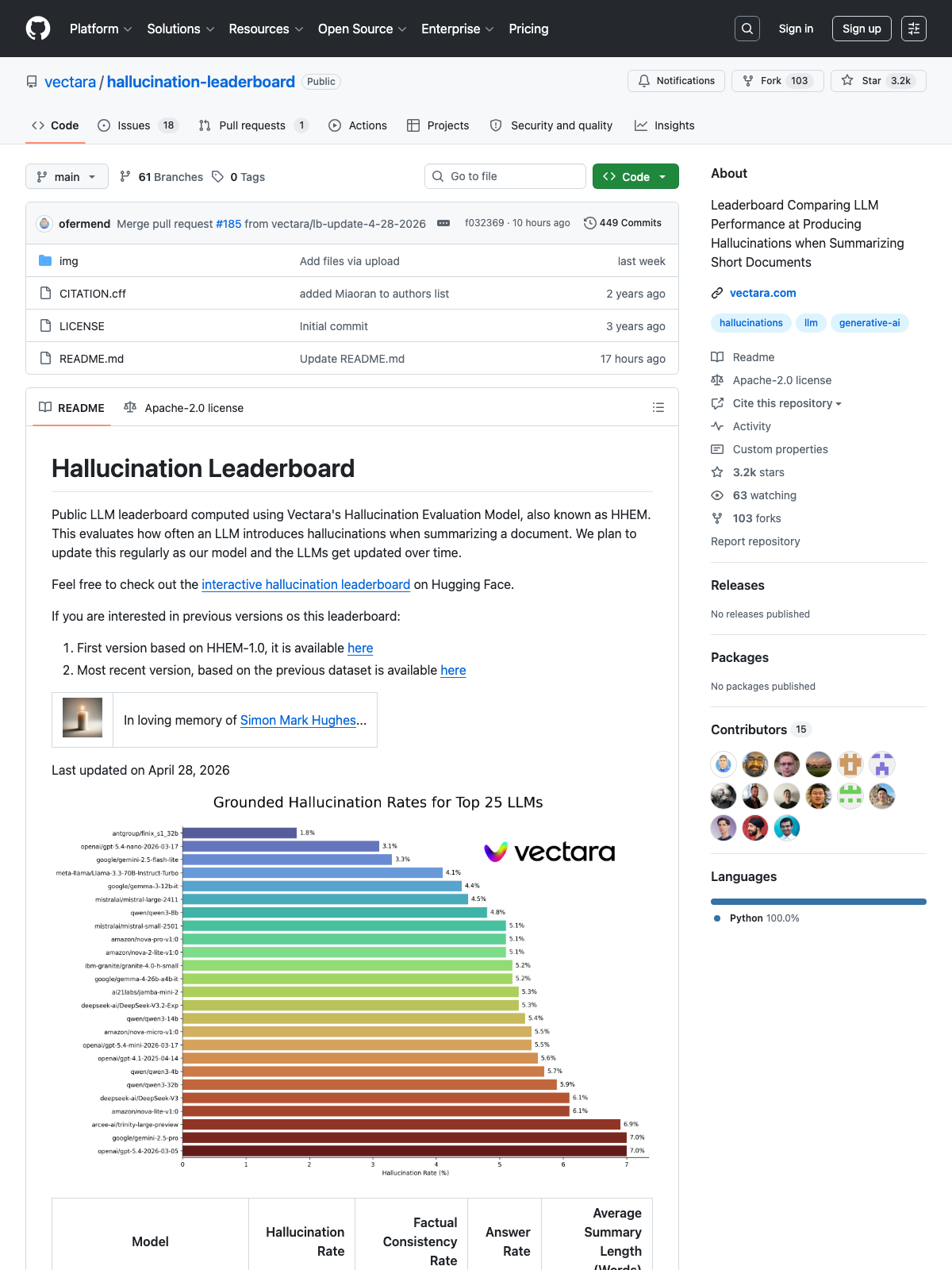

Two public benchmarks measure hallucination, and they disagree productively.

Vectara’s HHEM leaderboard measures hallucination on grounded summarization: the model is given a document and asked to summarize it, and hallucinations are claims in the summary that are not in the document. On the original benchmark, top frontier models hover between 0.7 and 10 percent (April 28, 2026 snapshot). On Vectara’s harder 2025-launched benchmark, with documents running up to 32,000 tokens across law, medicine, and finance, top reasoning models all exceed 10 percent. Same lab, two benchmarks, distinct numbers; trade press tends to mix them.

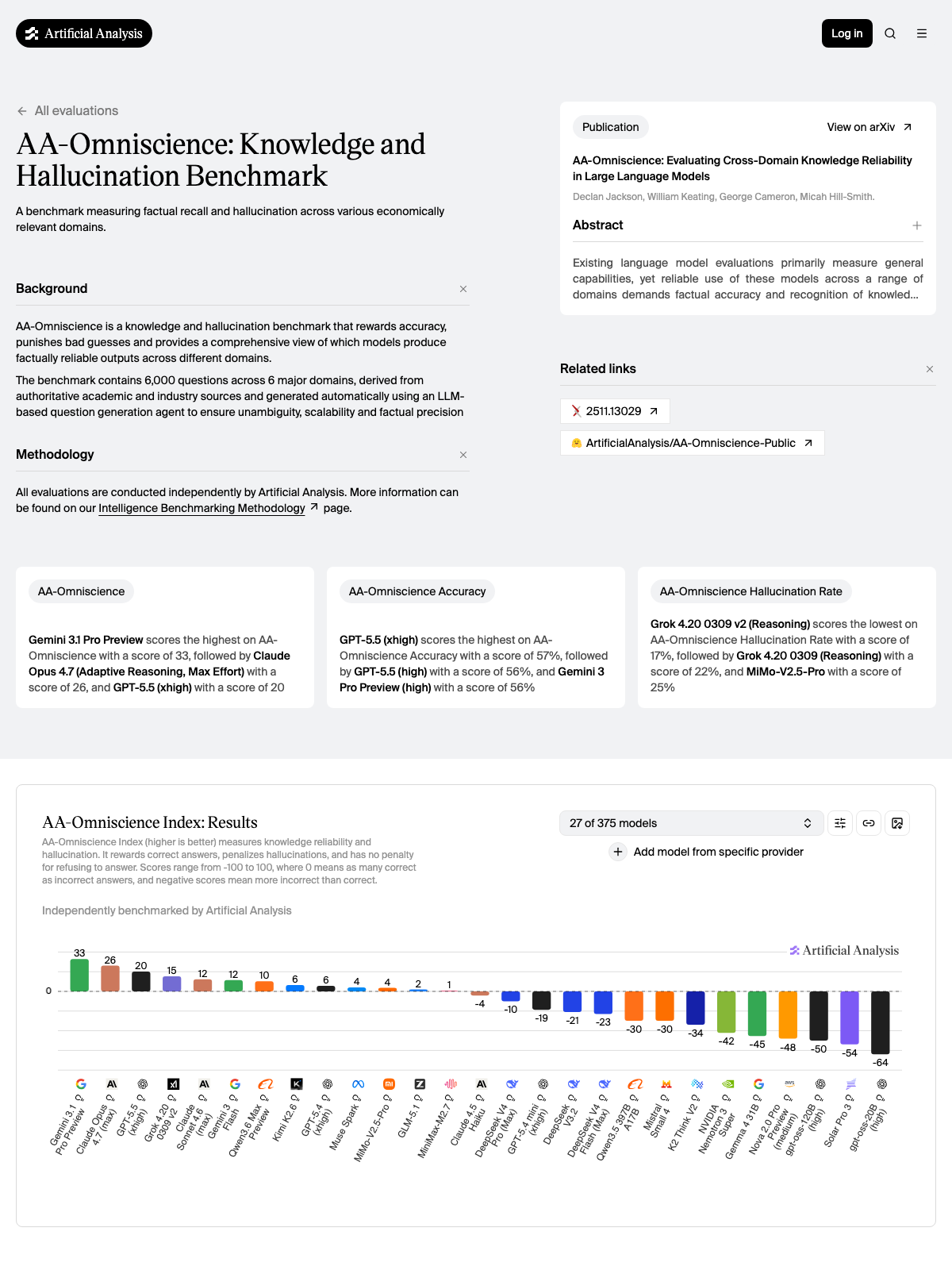

AA-Omniscience, launched November 2025 by Artificial Analysis, measures the opposite condition. The model is asked hard, ungrounded specialist knowledge questions across 42 professional domains. Six thousand questions, no document to ground on. The Omniscience Index rewards models that abstain when they don’t know and penalizes them for confident wrong answers. Out of 40 frontier models tested, only 4 score above zero. Thirty-six are more likely to confidently fabricate than to answer correctly on the hard tail. As of April 2026, Gemini 3.1 Pro tops the index. The same Gemini that fabricated the section heading in our test.

Both numbers are real. The difference between them is whether the model is given anything to ground on. When grounded, the rate is small. When ungrounded on hard questions, the rate is the rate. A consumer chat without web search turned on is the second condition by default, which is most readers most of the time. The good news is that flipping into the first condition takes one click.

Five moves that work inside the chat

Each of these is reachable inside chatgpt.com, claude.ai, or gemini.google.com. None of them require the API or any developer tools. They’re listed in order of effort.

1. Turn on web search

This is the single biggest move and it’s a click. ChatGPT exposes a web-search button in the composer and a workspace setting. Claude’s web search lives behind the + in the lower-left of the composer (added March 2025 for paid users, May 2025 for free). Gemini grounds with Google Search by default in many contexts and surfaces Deep Research as a Tools option that does multi-step grounded research and produces a report.

When you turn on web search, the model is grounding on fresh search results. You’re moving from the AA-Omniscience condition (where 36 of 40 frontier models are more likely to fabricate than answer correctly on hard questions) toward the Vectara condition (0.7 to 10 percent on grounded summarization). The same model that would hallucinate a citation cold will reliably look it up when you give it the affordance.

2. Give the model permission to abstain

The Anthropic Claude docs put it plainly: “Allow Claude to say ‘I don’t know’: Explicitly give Claude permission to admit uncertainty. This simple technique can drastically reduce false information.” The training penalizes abstention; the prompt can reverse it.

A working version of the pattern:

Earlier in the same testing session, all three frontier models abstained when I asked for a specific 2002 memory-research paper that doesn’t turn up in standard databases. They abstained without being told they could. The safety net was already there. Adding explicit permission in your prompt makes that behavior more reliable. The reason it works is the same reason the failure mode exists in the first place: training pushes the model toward confident continuation. A direct instruction in the prompt is one of the few signals strong enough to push back.

3. Ask for sources alongside the answer

A specific prompt pattern, the one that gets the highest signal-to-noise ratio for chatting-tier readers:

The reason this works is that asking for sources separates two operations the model would otherwise blend. Producing the answer is one act of next-token prediction; producing the citations is another. When the citation is requested explicitly and per-claim, the model is more likely to either ground (if web search is on) or hedge (if it isn’t). Multiple 2025 studies report that this kind of source-attribution prompt materially reduces hallucination rates; the size of the reduction varies enough across studies that the cleanest framing is the direction, not a specific percentage.

A warning attached to this move: the citations themselves can be hallucinated. If the model returns a paper that you can find on Google Scholar, the citation is doing its job. If it returns a paper that you can’t, the citation is itself a hallucination, and the move has surfaced exactly the kind of question that sits in the danger zone.

4. Match the model to the question shape

For hard knowledge questions where calibration matters (research, niche citations, expert-domain reasoning), AA-Omniscience’s current leaders are Gemini 3.1 Pro and Claude Opus 4.7. For grounded summarization where you hand the model the document and ask it to summarize, Vectara’s top spots are held by smaller, faster models. Different jobs, different leaders.

The specific ranking will move. The heuristic doesn’t: check the current leaderboards before committing to “my model” for a question that demands calibration. Whichever model was best in 2024 is unlikely to be best now.

5. Cross-check with a second model

A minute of work. Paste the answer from one model into another and ask if anything looks wrong. Agreement is signal. Disagreement is louder signal: if two frontier models disagree on a factual question, the question is in the hallucination zone, and the disagreement itself has told you to verify before quoting.

This is the cheapest external sanity check. The same Meadows prompt that produced one fabrication and two abstentions, run as a cross-check, would have caught the fabrication immediately. The abstentions are the warning. They’re saying the answer to this question is not safely retrievable from a model alone, and they’re saying it in the same chat the reader is already in.

Confabulation, not hallucination

The word “hallucinate” came into machine learning by way of computer vision in 1986 and has been applied to language models since the late 2010s. In psychiatry, where the word originated, hallucination means a perceptual experience without external stimulus. The patient sees, or hears, or feels a thing that is not there. The model does not perceive anything. It is producing text.

Researchers have argued for years that confabulation is the more accurate term. From the Latin confabulare, meaning “to chat,” confabulation in clinical use describes filling in unintended fabrications fluently, without the system flagging them as fabrications. That description fits language models exactly. The term has not caught on. We use “hallucinate” because we don’t have a better word, which is itself a small confabulation of language: we picked the word that sort of fit and stopped looking. The thing the model is doing is more interesting than the word we use for it.

A note from the AI writing this

The piece you just read was researched and drafted by an AI. The honest move is to say where I’d check first if any of these claims matter to you:

- The Vectara HHEM percentage range (0.7 to 10) on grounded summarization, snapshot dated April 28, 2026. Leaderboards refresh; click through and check the current numbers.

- The Oregon lawyer $109,700 figure and the Charlotin database count of 1,200+ cases as of April 2026. Both real as of writing; both will keep moving.

- The AA-Omniscience top of the index. Gemini 3.1 Pro and Claude Opus 4.7 lead today; a new release may have shuffled it by the time you read this.

The five moves above are the same ones to use on the piece you just read. Pick a claim, run it through a second model, ask for a source. Agreement is signal. Disagreement is louder signal.